Prompt Injection: the short story

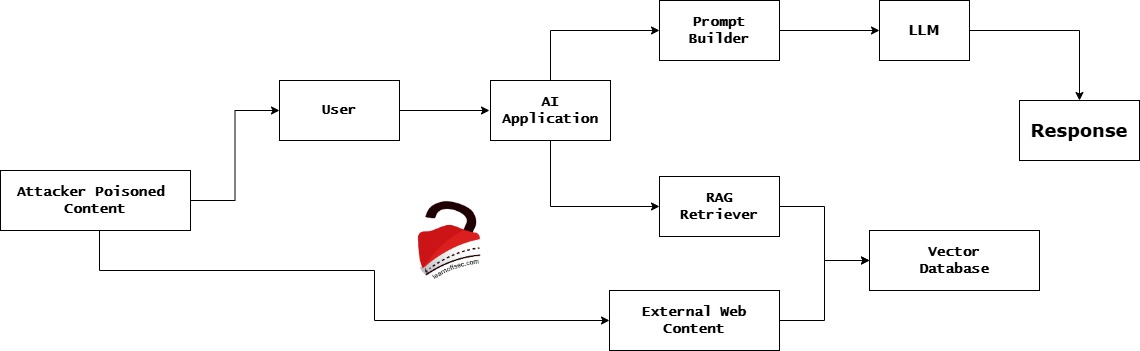

Prompt injection is an attack where an adversary hides instructions inside inputs (text, files, images, web pages, metadata, etc.) that a model treats as commands and the model follows them. The fundamental problem is simple-looking but deep: Large Language Models (LLMs) treat natural language both as data and as instructions, and attackers exploit that ambiguity. … Read more